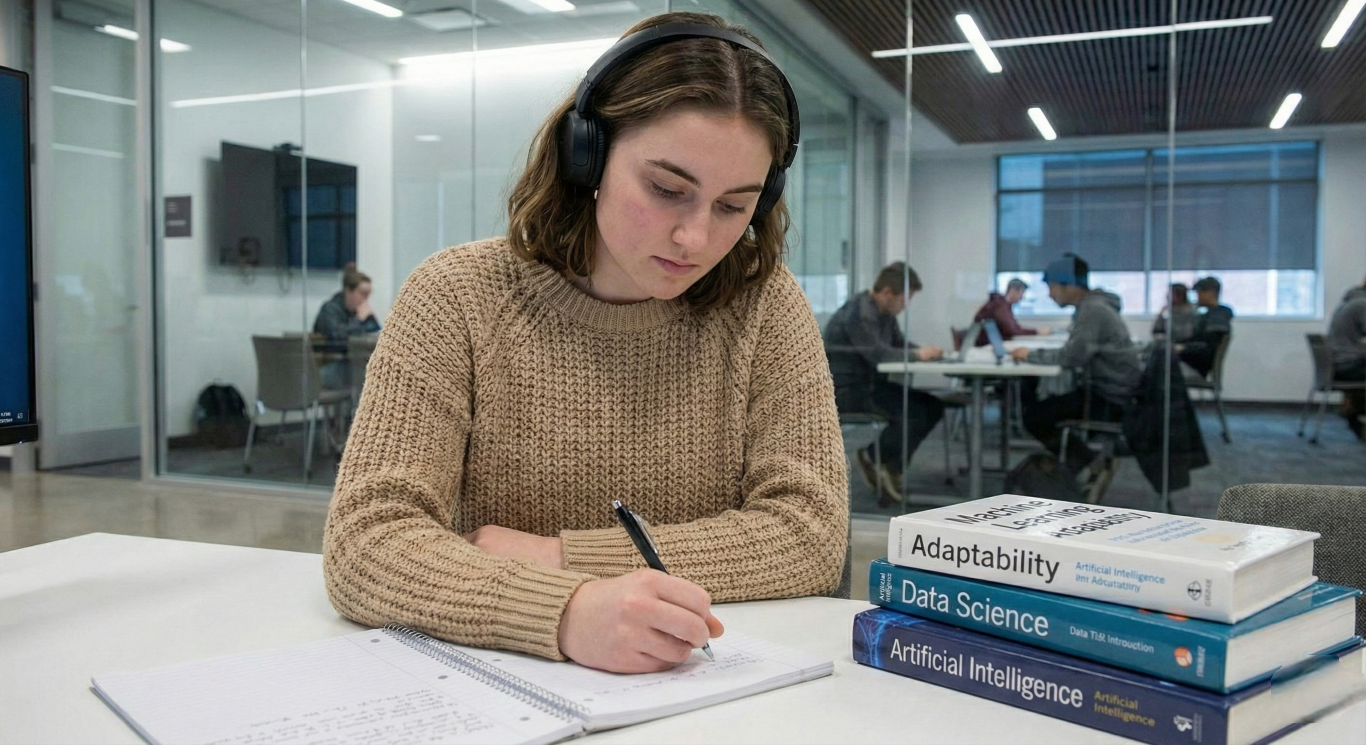

Imagine a weapon that can spot a threat, calculate danger, and fire — all in the time it takes you to blink. That’s not science fiction. AI-assisted targeting is already embedded in real military systems around the world, and the technology is advancing faster than the laws meant to govern it. Most people have no idea this is already happening. This article explains exactly how it works, who is building it, and why the world is still struggling to write the rules.

Key Takeaways

- AI-assisted military targeting is already active in real-world defense systems today.

- Autonomous missile interceptor systems can detect a threat and respond without a human pressing a button.

- No binding international law exists yet that specifically bans or regulates autonomous weapons.

- Over 120 countries support a treaty, but several major military powers continue to resist formal negotiations on a binding instrument.

What Is an Autonomous Weapon System?

An autonomous weapon system is any weapon that can find a target, make a decision about it, and take action — all without a human giving a direct order in that moment.

Think of it like a security alarm that doesn’t just alert you to a burglar. Instead, it locks the door, calls the police, and detains the intruder — all by itself, before you even wake up.

The Spectrum of Human Control

Not all AI weapons work the same way. There’s a spectrum of how much a human is involved:

- Human-in-the-loop: A human makes every firing decision. The AI only assists by providing information.

- Human-on-the-loop: The AI acts on its own, but a human can override or stop it in real time.

- Human-out-of-the-loop: The system operates fully independently once activated, with no real-time human oversight.

Most public debate focuses on that last category — systems where, once turned on, a machine decides who or what gets targeted.
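The three levels above can be written down as a small decision rule. What follows is a minimal sketch for illustration only: the names `ControlMode` and `may_proceed` are hypothetical, and it models nothing more than when a person's input is consulted.

```python
from enum import Enum


class ControlMode(Enum):
    HUMAN_IN_THE_LOOP = "in"
    HUMAN_ON_THE_LOOP = "on"
    HUMAN_OUT_OF_THE_LOOP = "out"


def may_proceed(mode, human_approved=False, human_vetoed=False):
    """Return True if a recommended action may go ahead under the given mode."""
    if mode is ControlMode.HUMAN_IN_THE_LOOP:
        # Nothing happens unless a person explicitly approves.
        return human_approved
    if mode is ControlMode.HUMAN_ON_THE_LOOP:
        # The system proceeds by default; a person can still stop it.
        return not human_vetoed
    # Out of the loop: no human input is consulted once activated.
    return True
```

The difference between the first two modes is the default. In the loop, silence from the human means "no"; on the loop, silence means "yes".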

What’s the Difference Between Automated and Autonomous?

This is a key distinction. An automated system follows fixed rules — like a robot on a factory floor that always repeats the same task. An autonomous system uses AI to make judgments in new situations it was never specifically programmed for.

In warfare, that difference is enormous. An autonomous weapon doesn’t just follow a script. It interprets what its sensors report and chooses a response that nobody spelled out in advance.

How AI Targeting Actually Works Right Now

You don’t need to imagine a future robot soldier. The technology is already deployed — just not always in the form people picture.

Project Maven: The US Military’s AI Eyes

Since 2017, the US military has been running Project Maven, a program that uses AI to analyze enormous amounts of drone footage and satellite imagery. The AI identifies objects of interest — vehicles, people, patterns of movement — far faster than human analysts ever could.

What used to take hours of human review can now happen in minutes. Military leaders have credited Maven with significantly reducing the time it takes to identify and act on targets.

More recently, generative AI — the same kind of technology that powers ChatGPT — is being integrated as an additional layer, enabling military planners to interact with the system conversationally. Humans still make the final call in this setup, but the AI is doing the heavy lifting of finding, sorting, and recommending.

Autonomous Missile Interceptor Systems: Machines That Fire Themselves

One of the clearest real-world examples of autonomous engagement already in use is a category of weapons known as autonomous air defense systems — missile interceptors that detect, assess, and respond to incoming threats entirely on their own.

Here’s how they work in simple terms:

- Radar detects an incoming rocket or missile

- The system calculates where it is headed

- If it’s on course for a protected area, the system launches an interceptor missile

- The threat is destroyed mid-air

Critically, this happens in seconds — far faster than any human could react. The system doesn’t wait for someone to press a button. Once activated, it decides and fires on its own.
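The "calculate where it is headed" step is, at its simplest, textbook projectile physics. The sketch below is a toy illustration under loud assumptions: drag-free flight, flat ground, a circular protected zone, and a single perfect radar reading. The names `Track`, `predicted_impact`, and `threatens` are hypothetical, and real systems are far more complex than this.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration, m/s^2


@dataclass
class Track:
    """One radar reading: position (m) and velocity (m/s); z is altitude."""
    x: float
    y: float
    z: float
    vx: float
    vy: float
    vz: float


def predicted_impact(track):
    """Ground impact point (x, y), assuming drag-free flight over flat ground."""
    # Solve z + vz*t - 0.5*G*t^2 = 0 for the positive root t.
    t = (track.vz + math.sqrt(track.vz ** 2 + 2 * G * track.z)) / G
    return track.x + track.vx * t, track.y + track.vy * t


def threatens(track, center, radius_m):
    """True if the predicted impact falls inside the circular protected zone."""
    ix, iy = predicted_impact(track)
    return math.hypot(ix - center[0], iy - center[1]) <= radius_m
```

The design point worth noticing is the filter itself: a rocket predicted to land outside the protected zone is simply ignored, which is how the decision to respond can be reduced to a geometric check.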

This is considered a defensive autonomous system, which is why it has attracted less controversy than offensive weapons. But it illustrates that the line between “AI that assists humans” and “AI that makes the call itself” has already been crossed.

Drones With AI Brains

Modern combat drones are another frontier. In the Russia-Ukraine conflict, both sides have been using AI-assisted drone technology at scale.

AI has reportedly made certain drone strike systems far more accurate than their manually operated versions. Some drones are now designed to keep operating even when communication signals are jammed, because they don’t need to check in with a human operator. The AI onboard makes the targeting decision independently.

The Legal Gap: No Binding International Law

Here is the central problem: no international treaty specifically prohibiting or regulating autonomous weapons systems currently exists.

Why Hasn’t the World Agreed on Rules?

International discussions have been happening since 2014 under the Convention on Certain Conventional Weapons (CCW), a UN framework covering weapons deemed excessively injurious or indiscriminate in their effects. Year after year, countries have met in Geneva to debate the issue.

Year after year, no binding agreement has emerged.

The primary obstacle is that several major military powers — including the United States, Russia, Israel, India, and others — have repeatedly blocked proposals to begin formal treaty negotiations. These are, not coincidentally, some of the same countries investing most heavily in developing these systems.

What Has Actually Been Agreed?

In late 2025, 164 countries voted in favor of a UN General Assembly resolution calling for a comprehensive approach to autonomous weapons — with only 6 countries voting against. But a resolution is not a law. It’s a statement of concern. It creates no obligations and sets no limits.

The UN Secretary-General has repeatedly called for a legally binding treaty to be concluded by 2026. Most legal experts acknowledge that target will not be met.

The Accountability Problem

One of the deepest concerns isn’t just about stopping bad actors. It’s about who is responsible when something goes wrong.

If an AI weapon kills the wrong person — a civilian, a misidentified target — who is held accountable? The soldier who activated the system? The company that built the algorithm? The government that deployed it? Currently, there is no clear legal answer to this question.

The Arguments on Both Sides

Here’s a fair summary of both positions.

Arguments for AI in Warfare

- Speed: AI systems can react in milliseconds. In missile defense, that speed saves lives.

- Reduced risk to soldiers: Autonomous systems can operate in environments too dangerous for humans.

- Fewer emotional errors: Machines don’t act out of fear, exhaustion, or anger — factors that have historically contributed to civilian casualties.

- Precision: Well-trained AI targeting may be more accurate than human operators in certain controlled scenarios.

Arguments Against Autonomous Weapons

- Errors cascade: An AI trained on flawed data can misidentify targets at scale — and do so repeatedly before anyone notices.

- No accountability: It is currently impossible to hold a machine responsible under international law.

- Bias risk: AI systems can pick up biases from training data, potentially targeting people based on patterns related to race, gender, or movement behavior rather than actual threat.

- Escalation risk: Research has found that the speed of autonomous systems can trigger rapid, unintended escalation between opposing forces, with a conflict spiraling before humans have time to intervene.

- Proliferation danger: Unlike nuclear weapons, autonomous drones are cheap to build and easy to scale. Once the technology spreads, it may reach non-state actors, criminal organizations, or authoritarian governments.

What Happens Next?

The technology is advancing faster than diplomacy. Here is where things stand:

- The US military has made AI a central pillar of its strategy, with Pentagon AI spending in the billions annually.

- Russia, China, Israel, South Korea, India, and others are all actively developing autonomous systems.

- Over 120 countries have called for a treaty on autonomous weapons — but formal negotiations have not yet begun as of early 2026.

- The UN Secretary-General’s 2026 deadline for a legally binding instrument is expected to be missed.

The window for preventative action — setting rules before the technology becomes so embedded in military infrastructure that rules become unenforceable — is narrowing.

Conclusion

AI targeting systems are not a future threat — they are a present reality. From missile defense platforms that fire without human input, to AI tools that sort and prioritize battlefield targets in seconds, the technology is operational. What’s lagging behind is governance. More than 120 countries want binding rules, but the countries with the most powerful systems keep blocking formal negotiations. The fundamental question — whether a machine should ever be allowed to decide to take a human life — remains unanswered in international law. And the clock is running.

Frequently Asked Questions

Are there any AI weapons that already fire without human approval?

Yes. Autonomous air defense systems, which detect and intercept incoming missiles, can respond to threats without requiring a human to press fire for each interception. Offensive autonomous engagement, meaning the targeting of human beings without real-time human control, is more contested but has reportedly occurred in conflict zones.

What is the difference between AI-assisted targeting and a fully autonomous weapon?

AI-assisted targeting means the AI finds and recommends targets, but a human makes the final decision to fire. A fully autonomous weapon means the machine both selects and engages targets without any human input during the engagement itself. Most current systems sit somewhere between these two extremes.

Can existing laws like the Geneva Conventions apply to autonomous weapons?

Existing international humanitarian law — including the Geneva Conventions — does apply broadly, requiring that attacks distinguish between combatants and civilians, and avoid disproportionate harm. However, these laws were written for human soldiers and do not specifically address how to assign responsibility when an algorithm makes a lethal mistake.

Why don’t countries just agree to ban autonomous weapons?

The main obstacle is that the countries developing the most advanced autonomous systems — including the US, Russia, and China — have strategic incentives not to limit their own capabilities. In international arms control, binding agreements require the cooperation of the most powerful actors, and that cooperation has not yet materialized for autonomous weapons.